Over the past two days I have hosted the 3rd CRI/Sanger NGS workshop at CRI. I hosted the first of these in 2009 and the demand for information about NGS technologies and applications appears to be greater today than it was back then. We had just under 150 people attend, and had sessions on RNA-seq, Exomes and Amplicons as well as breakouts covering library prep, bioinformatics, exomes and amplicons. Our keynote speaker was Jan Korbel from EMBL.

I wanted to record my notes from the meeting as a summary of what we did for people to refer back to. I’d also hope to encourage you to arrange similar meetings in your institutes. Bringing people together to talk about technologies is an incredibly powerful way to get new collaborations going and learn about the latest methods.

Let me know if you organise a meeting yourself.

I will follow up this post with some things I learnt about organising a scientific meeting. Hopefully this will help you with yours.

3rd CRI/Sanger NGS workshop notes:

RNA-seq session:

Mike Gilchrist: from NIMR presented on his groups work in Xenopus development. He strongly suggested that we should all be doing careful experimental design and consider that variation within biological groups needs to be understood as methodological and analytical biases are still present in modern methods. mRNA-seq is biased due to transcript length, library prep and sample collection, which needs to be very carefully controlled (SOPs and record everything).

Karim Sorefan: from UEA presented a fantastic demonstration of the biases in smallRNA sequencing. They used a degenerate 9nt oligo with 250,000 possible sequences as the input to a library prep and saw heavy bias (up to 20,000 reads from some sequences, where only 15 expected, similar issues with a larger 21nt 1014 sequences) mainly coming from RNA-ligation bias. smallRNAs are heavily biased due to RNA-ligase sequence preferences, some RNA-ligase bias comes from the impact of 20 structure on ligation (there are other factors as well). He presented a modified adapter approach where the 4bp at the 3’ or 5’ ends of the adapters are degenerate, and very significantly improved bias to almost the theoretical optimum.

Neil Ward: from Illumina talked about advances in Illumina library preparation and the Nextera workflow, which is rapid (90 mins) and requires vanishingly small quantities of DNA (50ng) without any shearing and uses a dual-indexing approach to allow 96 indexes from just 20 PCR primers (8F and 12R, possibly scalable to 384 with just 40 primers). Illumina provide a tool called “Experiment Manager†for handling of sample data to make sure the sequencing run can be demultiplexed bioinformatically. Nextera does have some bias (as with all methods) but is very comparable to other methods. He showed some amplicon resequencing application (drop off of the ends of PCR products at about 50bp), with good coverage uniformity and demonstrated the ability to detect causative mutations.

Exome session:

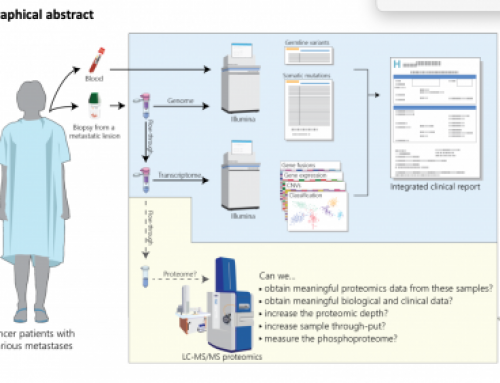

Patrick Tarpey: Working in the Cancer Genome Project team at Sanger was talking about his work on Exomes sequencing. Refreshed everyone on how mutations can be acquired somatically over time, that there are differences between Drivers and Passengers and that exome sequencing can help to detect drivers where mutations are recurrent e.g. SFB31. They are assaying substitutions, amplifications and small InDels (rearrangements and epigenetic not currently assayable). He presented a BrCa ER+/- T:N paired exome analysis using Agilent SureSelect on HiSeq with Caveman (substitutions) and Pindel (InDel) analysis. About 1/3rd of data is PCR duplicates or off-target so they are currently aiming for 10Gb with 5-6Gb on-target for analysis, ~80% bp at 30x coverage. Validation is an issue does it need to be orthogonal or not? So far they have identified about 7000 somatic mutation in the BrCa screen (averaging around 10 indels and 50 substitutions) however some mutator phenotype samples have very large numbers of substitutions (50-200). An interesting observation was that TpC (Thymine precedes Cytosine) dinucleotides are prone to C>T/C/A substitutions (why is currently unknown). They found 9 novel cancer genes including two oncogenes (AKT2 & TBX3). And noted that many of the mutated genes in the BrCa screen abrogate JUN kinase signalling (defective in about 50% of BrCa in the study).

David Buck: Oxford Genomics Centre, spoke about their comparison of exome technologies (Agilent vs Illumina). His is a relatively large lab (5 HiSeqs) with automated library prep on Biomek FX. He talked about how exomes can be useful but have limitations (SV-seq is not possible, not clear about impact on complex disease analysis). They did not see a lot of difference in the exome products and he talked about the arms race between Agilent and Illumina. They recently encountered issues in a 650 exome project and saw drop-out of GC rich regions, almost certainly due to a wrong pH in NaOH from acidification during shipment. They had seen issues with SureSelect as well. All kits can go wrong make sure you run careful QC of the data before passing it through to a secondary analysis pipeline.

Paul Coupland (AGBT poster link): Talked about advances in genome sequencing. He discussed nanopores and some of the issues with them, focusing on translocation speed. And there was some discussion about whether we would realy get to 50x genome in 15 minsSMRT-cell sequencing. Briefly they are blunt-end ligating DNA to SMRT-bell adapters to create circular molecules for sequencing, read lengths are up to 5kb but made of sub-reads (single pass reads) averaging 1-2kb, 15% error rate on single pass sequencing but <1% on consensus called sequence, vast majority of errors are insertions, variability is often down to library prep rather than sequencing, improved library prep methods are needed. He completed a P falciparum genome project in 5 days DNA on 21 SMRT-cells for 80x genome coverage, long reads are really helpful in genome assembly. Paul also also mentioned the Ion Torrent technology as well as 454, Visigen, Bionanomatrix, Visigen, GnuBio, GeniaChip, etc.

Amplicons and clinical sequencing:

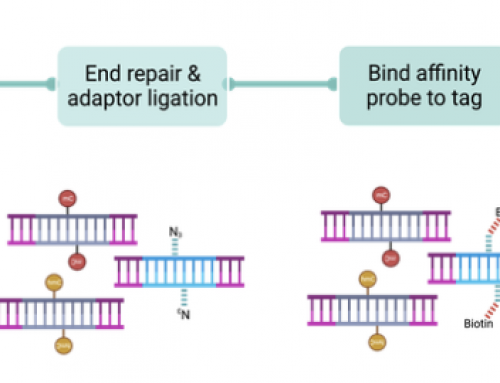

Tim Forshew: Tim is a post-doc in Nitzan Rosenfeld’s group here at CRI. He is working on analysis of circulating tumour DNA (ctDNA) and its utility for tumour monitoring in patients via blood. ctDNA is dilute, heavily fragmented and the fraction of ctDNA in plasma is very low. The group are developing a method called “TAM-seq” which uses locus-specific primers tailed with short tag sequences, followed by a secondary PCR from these to add NGS adapters and patient specific barcodes. They are pre-amplifying a pool of targets, then using Fluidigm to allow single-plex PCR for specificity and generating 2304 PCRs on one AccessArray. PCR products are recovered for secondary PCR and the sequenced. Till now they have sequenced 47 OvCa (TP53, PTEN, EGFR*, PIK3CA*, KRAS*, BRAF* *hotspots only), with amplicons of 150-200bp (good for FFPE), from 30M reads on GAIIx for 96 samples they achieve >3000x coverage. Tim presented an experiment testing the limits of sensitivity using a series of known SNPs in five individuals mixed to produce a sample with 2-500 copies per SNP and they looked at allele frequency for quantitation. Sanger-sequencing confirmation of specific TP53 mutations was followed-up by digital PCR to showed these were detectable at low frequency in the pool. Fluidigm NGS found all TP53 mutations and additionally detected an unknown EGFR mutation present at 6% frequency in the patient, this mutation was not present in the initial biopsy of the right ovary. Tim performed some “digital Sanger sequencing” to confirm EGFR mutations, and looking in more detail the biopsies of Omentum showed the mutation whilst it was missing in both ovaries. The cost of the assay is around $25 per sample for duplicate library prep and sequencing of clinical samples, they can find mutations as low as 2% (probably lower as sequencing depth and technology gets better), and are now looking at the dynamics of circulating tumour DNA. TAM-seq and digital PCR are useful monitoring tools and can detect response or relapse weeks or even months earlier than current methods, it is also possible to track multiple mutations in a patient as complex-biomarker assays probably allowing better analysis of tumour heterogeneity and evolution during treatment. He summarised this as a personalised liquid biopsy!

Christohper Watson: Chris works at St James’s clinical molecular genetics lab which runs a series of NGS clinical tests biweekly to meet turn-around-time (TAT), so far they have issued over 1500 NGS based reports. There’s was the first lab in the UK to move to NGS testing, see Morgan et al paper in Human Mutation. They perform triplicate lrPCR amplification for each test and pool before shearing (Covaris) for a single library prep (Beckman SPRIworks) for Illumina NGS, aiming for reports to be automated as much as possible, still doing Sanger sequencing where not enough individuals to warrant NGS approaches, lrPCRs are pooled for library prep by shearing (Covaris) and standard Illumina library prep on Beckman SPRIworks robot (moving to Nextera), they run SE100bp sequencing reads (50x coverage) and are using NextGENe from SoftGenetics for data analysis that gives mutation calls in standard nomenclature. Currently all variants are confirmed by Sanger-seq (100% concordance) but this is under review as the quality of NGS may make this unnecessary and other NGS platforms may be used instead. The lab have seen a 40% reduction in costs and 50% reduction in hands on time their process is CPA accredited. They see clinicians moving from sequential analysis of genes to testing all at once improving patient care and now 50% of the workload at Leeds is NGS. Test panels cost £530 each per patient. Chirs discussed some challenges (staff retraining, lrPCR design, bioinformatics, maximising run capacity), and they are probably moving to exomes or mid-range panels, the “medical exome”. On thing Chris mentioned is that in the UK TAT for BRCA is required to be under 40days, which was a lot longer than I thought.

Howard Martin: Is a clinical scientist working at EASIH at Addenbrokes Regional genetics lab where they are still doing most clinical work with Sanger-seq offered on a per gene basis which can take many months to get to a clinically actionable outcome. They initially used 454 but this has fallen very much out of favour, Howard has led the testing and introduction of the Ion Torrent platform, he had the first PGM in the UK and likes it for a number of reasons; very flexible, fast run time, cheap to run, scalable run formats, etc. They are getting 60Mb from 314 chips. They have seen all the improvements promised by Ion Torrent and are waiting for 400bp reads. He presented an HLA typing project for bone marrow transplantation where they need to decode 1000 genotypes for the DRB1 locus. He is also doing HIV sequencing to identify sub-populations in individuals from a 4kb lrPCR. They are also concatenating Sanger PCRs as the input to fragment library preps to make use of the up-stream sample prep workflows for Sanger-seq, costs are good data quality is good with a very fast TAT.

Graeme Black: Graeme is a clinician at the St Mary’s hospital in Manchester. He is trying to work out the best way to solve the clinical problem rather than looking specifically at NGS technologies. He needs to solve a problem now in deciphering genetic heterogeneity. The NGS is done in clinically accredited labs. He is working primarily on retinal dystrophies that are highly heterogeneous conditions involvinglem now in deciphering genetic heterogeneity. The NGS is done in clinically accredited labs. He is working primarily on retinal dystrophies that are highly heterogeneous conditions involving around 200 genes. With Sanger-based methods clinicians need to decide which tests to run as many patients are phenotypically identical, they cherry-pick a few genes for Sanger sequencing but only get about 45% success rates. The FDA looking to accredit gene therapies for retinal disorders already. Graeme would like success rates on tests to be much higher, complex testing should be possible and universally applied to removes inequality of access (~20% patients get tested). They are now offering a 105 gene test (Agilent SureSelect on 5500 SOLiD) and Graeme presented data from 50 patients. A big issue is that the NHS finds it really difficult to keep up with the rapid change in technology and information, the NGS lab has doubled the computing capacity of the whole NHS trust!!!. They have pipelined the analysis to allow variant calling and validation for report writing and are finding 5-10 variants per patient, results from the 50 patients presented showed 22 highly pathogenic mutations and they were able to report back valuable information to patients. He is thinking very hard about the reports that go back to clinicians as carrier status is likely to be important. 8/16 previously negative patients got actionable results and he saw a 60-65% success rate from the NGS test. Current single-gene Sanger £400, complex 105 gene NGS cost £900, NHS spends more but patients get better results www.mangen.co.uk.

Bioinformatics for NGS:

Guy Cochrane, Gord Brown, Simon Andrews, John Marioni: This was one of the breakout sessions where we had very short presentations from a panel followed by 40 minutes discussion. Guy: Is a group leader at the EBI who are the major provider of Bioinformatics services in Europe; databases, web tools, genomes, expression, proteins etc, etc, etc. He spoke about the CRAM project to compress genome data currently we use 15-20 bits per base, CRAM in lossless is 3-4 b/b and it should be possible to get much lower with tools like reference based compression. Gord: Is a staff bioinformatician in Jason Carroll’s research group at CRI. He spoke about replication in NGS experiments and its absolute necessity. Why have we seemingly forgotten the lessons learnt with micorarrrays? Careful experimental design and replication are required to get statistically meaningful data from experiments. Biological variability needs to be understood even if technical variability is low. Gord showed a knock-down experiment with singletons (looked OK) but replication showed how unreliable the initial data was. Reasons why people don’t replicate; cost, “if you can’t afford to do good science is it OK to do cheap bad science?â€, sample collection issues, time, etc. John: Is a group leader at the EBI who is working on RNA-seq. He talked about the fact that it is almost impossible to give prescribed guidelines on analysis, the approach depends on your question, samples, experiment, etc. Things are changing a lot. Sequencing one sample at high depth is probably not as good as sequencing more replicates at lower depth (with similar experimental costs). There are many read mapping tools (genome, transcriptome fast but you could miss stuff, tools, de novo gene models 60 tools at last count) but it is difficult to choose a “best†method. Many are using RPKM for normalisation but it is still not clear how best to normalise and quantify. There is a lack of an up-to-date gold standard data set (look out for SEQC). john thought that differential expression detection does seem to be a reasonably solved problem, but more effort is needed for calling SNPs in the context of allele-specific expression. He pointed out that there is no VCF-like format for RNA-seq data that might be useful to store variation data in RNA-seq experiments. Simon: Is a bioinformatician at the Babraham Institute and wrote the FastQC package. It should be clear that we need to QC data before we put effort into downstream analysis! Sequencers produce some run QC but this may not be very useful for your samples. Library QC is also still important. Why bother? You can verify your data is high-quality, not contaminated and not (overly) biased. This analysis is also an opportunity to think about what you can do to improve any issues before starting the real analysis. You can also discard data, painful though that may be! Your provider should give you a QC report. Look at Q-scores, sequence composition (GC, RNA-seq has a bias in the first 7-10 nucleotides), trim off adapters, check species, duplication rates, aberrant reads, (FastQC, PrinSeq,QRQC, FastX, RNA-seq QC FastQScreen, TrimGalore).

An issue that was discussed in this session was “barcode-bleeding” where barcodes appear to switch between samples, not sure what is going on, are we confident that barcoding has well understood biases?

Our Keynote Presentation:

Jan Korbel: Jan is a groupl leader at the EMBL lab in Heidelberg he was previously in Mike Snyders group in Yale where he published one of the landmark papers in Human genomic structural variation. Jans talked about phenotypic impact of SV in human disease, rare pathogenic SVs in very small numbers of people e.g. Downs muscular dystrophy, common SVs in common traits psoriasis cancer and cancer-specific somatic SVs e.g. PrCa TMPRSS2/ERG, leukaemia BCR/ABL.

His groups interest is deciphering what SVs are doing in Human disease. Using SV mapping from NGS data Korebel et al 2007 Science They have developed computational approaches to improve the quality of SV detection, read-depth, split-reads, assembly. He is now leading the 1000 genomes SV group. They produced the first population level map of SVs and looked at the functional impact in GX data. A strong interest is in trying to elucidate the biological mechanisms behind SV formation in the Human genome, non-allelic homologous recombination (NAHR) 23%, mobile element insertion (MEI) 24%, variable number of simple tandem repeats (VNTR) 5%, non homologous rearrangement (NH, NHEJ) 48% (%ages from 1000 genomes data). Some regions of the genome appear to be SV hotpsots and are often close to telomeric and centromeric ends.

Cancer is a disease of the genome: it is often very easy to see lots of SV in karyotopes, every cancer is different, but what is causal or consequential? The Korbel group are working on the ICGC PedBrain project; in medulloblastoma there are only about 1-17 SNVs per patient with very poor prognosis. He presented their work on the discovery of “circular†(to be proven) double minute chromosomes. In teir first patient thay have confirmed all inter-chromosomal connections by PCR as present in the Tumour only. In a 2nd patient chr15 was massively amplified and rearranged at same time Stephens et al at the Sanger Institute described “chromothripsis†in 2-3% of Cancers. Jan asked the question “is the TP53 mutation in li fraumeni syndrome driving Chromothripsis?†They used Affy SNP6 and TP53 sequencing to look and saw a clear link between SHH and TP53 mutation in Chromothripsis in medullobastoma. The data is suggestive that chromothripsis is an early, possibly initiating event in some medulloblastomas and these cancers do not follow the textbook progressive accumulation of mutations model of cancer development and that it is primarily driven by NHEJ.

TP53 status is actionable and surveillance of patients with MRI, mammography increases survival and personalised treatments may be required as exposure to radiotherapy, etc could be devastating to these patients.

A great blog post James!

I've been working at the Nowgen Centre, Manchester (the same site/hospital as Professor Black's group) as part of the their Professional Training Team for over a year. We develop/run/deliver a range of courses, primarily for the NHS and clinical sector, covering bioinformatics, NGS, cancer and stratified medicine – http://www.nowgen.org.uk

We have a "Fundamentals of NGS" event running in June (the third time we have done so). One of the greatest challenges we face satisfying the varied demands of our delegates. As NGS is now impacting on so many areas of biology we are seeing more representation from outside NHS and academic human research.

The rapid pace of technology development is also an exciting yet difficult hurdle to tackle, especially for the large number of people who are only just arriving into the NGS scene!

Look forward to hearing more of your thoughts.

Tom

Great post, thanks!

One small thing: I'm pretty sure Paul Coupland talked about PacBio, not nanopores…

Sounds like you had a great meeting, James! Did you set up a website (or at least a dedicated page) for it? We'd love to add any such future meetings you have to our 'NGS Conferences' list (http://blueseq.com/knowledgebank/ngs-conferences-and-meetings/)